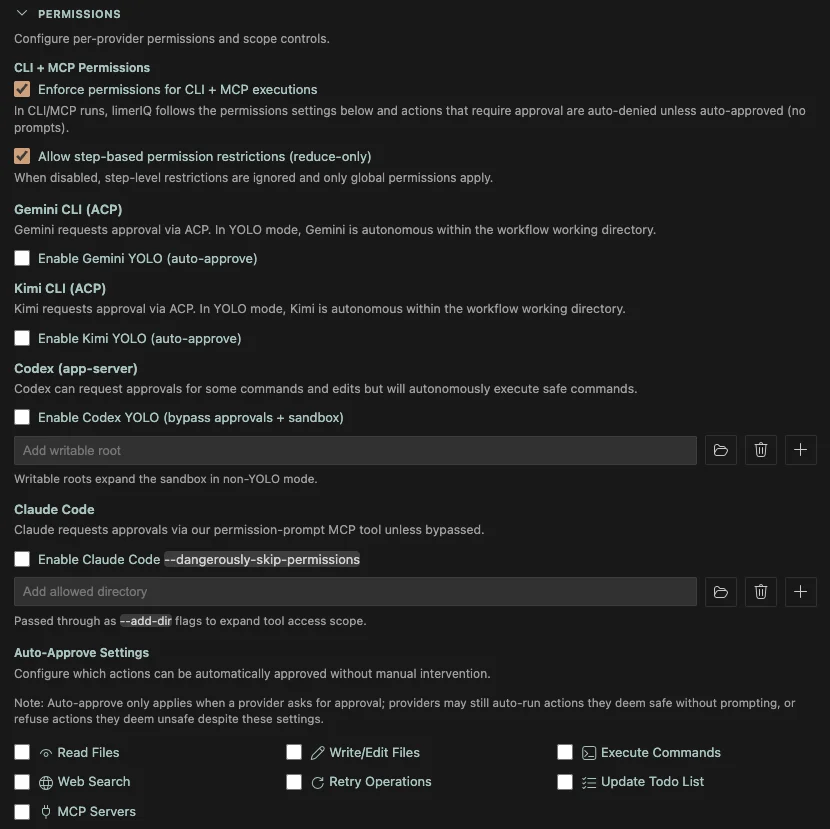

A single checkbox called "danger mode" is not a safety model. limerIQ now brokers approvals in the terms each provider actually understands.

One of the easiest mistakes in AI tooling is flattening safety into a fake abstraction.

Everything gets reduced to a handful of global toggles:

- safe

- unsafe

- allow all

- ask me first

That might look clean on a settings page, but it falls apart the moment you try to govern real execution across different providers.

Claude, Codex, and Gemini do not expose the same permission surfaces. They do not request approval in the same way. They do not define working boundaries in the same way. A system that pretends otherwise usually ends up doing one of two bad things:

- it becomes so generic that the controls stop meaning much

- it quietly bypasses the native model and calls that simplification

We did not want limerIQ to do either.

The Principle: Native Parity

The right rule here is simple:

limerIQ should be at least as good as each provider's native permission and sandbox model, never worse.

That means the governance layer cannot just paper over the differences. It has to respect them and broker approvals in a way that still maps cleanly to the underlying runtime.
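One way to make "never worse" concrete is a small Python sketch built on our own assumptions. Treat every decision as ALLOW, ASK, or DENY, and let the governance layer combine its answer with the provider's native answer by taking the stricter of the two. The `Decision` ordering and the `combine` function are illustrative names, not limerIQ's API.

```python
from enum import IntEnum


class Decision(IntEnum):
    # Ordered from least to most restrictive, so max() picks the stricter one.
    ALLOW = 0
    ASK = 1
    DENY = 2


def combine(native: Decision, governance: Decision) -> Decision:
    """Final decision for one request under a 'never worse than native' rule.

    The governance layer may tighten what the provider would do
    (ALLOW -> ASK, ASK -> DENY) but can never loosen it.
    """
    return max(native, governance)
```

In this sketch ASK only means an approval is still owed. Who may answer it is a separate policy question. What `combine` rules out is the governance layer quietly downgrading what the provider itself requires.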

This is one of those architectural choices that sound subtle until you feel the alternative.

If the permission model is fake, trust evaporates fast.

Users stop knowing what "approved" means.

Teams stop knowing what "sandboxed" means.

Headless execution becomes especially dangerous because the abstraction is doing guesswork instead of policy.

What This Looks Like in Practice

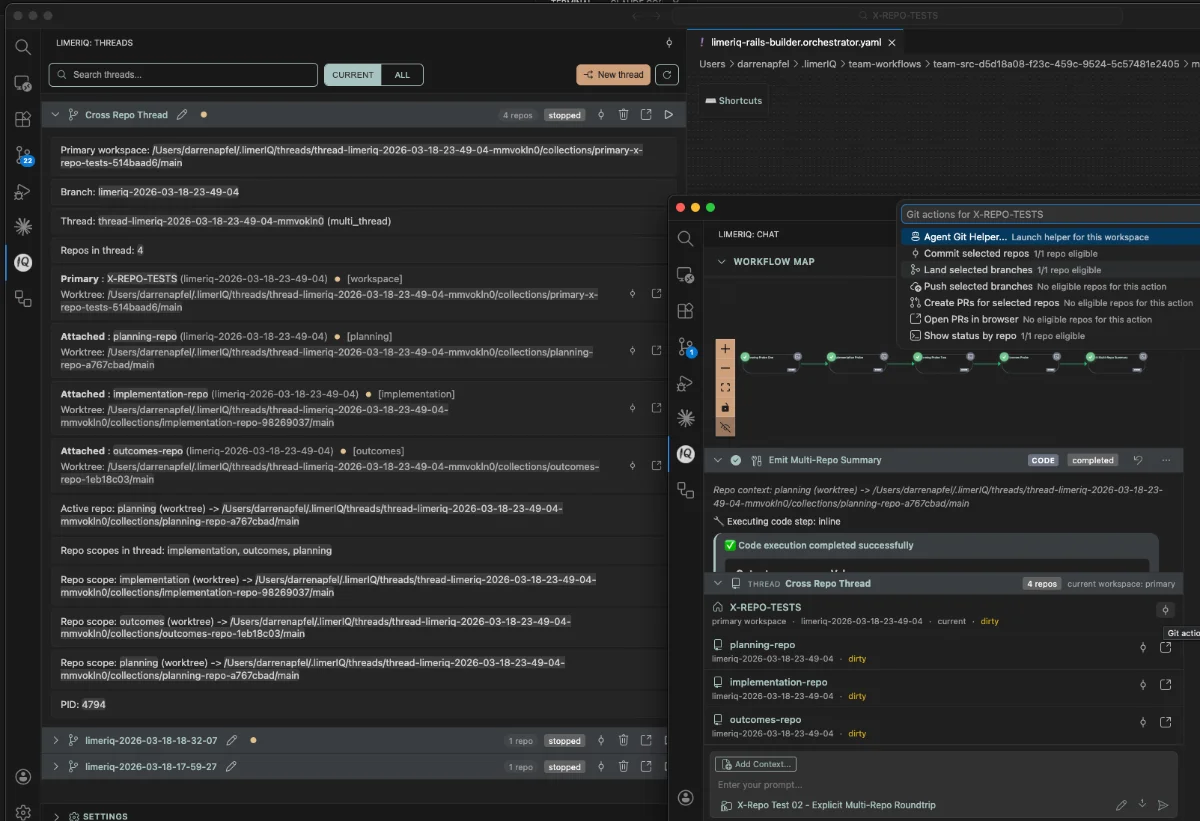

limerIQ now handles provider permissions through a central broker, but the broker does not erase the provider.

For Claude, limerIQ can route approvals through Claude's permission prompt tool path.

For Codex, limerIQ uses the app-server integration so Codex can request approval for commands and file changes in a way that lines up with its native sandbox posture and writable-root model.

For Gemini, limerIQ uses the Agent Client Protocol (ACP) so filesystem and tool permission requests still respect Gemini's cwd-scoped model instead of inventing a fake workspace abstraction on top.

That is the right shape.

The product gets a unified governance layer, but the provider-specific safety semantics are still real underneath.
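As a rough illustration of that shape, here is a minimal Python sketch of a broker that keeps one adapter per provider. Everything in it is hypothetical. `PermissionBroker`, the adapter classes, and the action names are not limerIQ's code, and the adapters do not call Claude's, Codex's, or Gemini's actual interfaces. They only model the scoping ideas named above: Claude's decision comes from an external prompt callback, Codex checks writable roots, and Gemini checks the session's working directory.

```python
from dataclasses import dataclass
from enum import Enum
from pathlib import Path
from typing import Callable, Protocol

FILE_ACTIONS = {"read_file", "write_file"}  # hypothetical action names


class Decision(Enum):
    ALLOW = "allow"
    ASK = "ask"    # route to whoever answers approvals
    DENY = "deny"


@dataclass(frozen=True)
class PermissionRequest:
    provider: str  # "claude", "codex", or "gemini"
    action: str    # e.g. "write_file" or "run_command"
    target: str    # a path for file actions, a command line otherwise


class ProviderAdapter(Protocol):
    def evaluate(self, req: PermissionRequest) -> Decision: ...


def inside(path: str, root: Path) -> bool:
    """True if `path` stays under `root` once '..' and symlinks are resolved."""
    p = Path(path)
    if not p.is_absolute():
        p = root / p  # relative paths are read against the scope root
    return p.resolve().is_relative_to(root.resolve())  # Python 3.9+


@dataclass
class ClaudeAdapter:
    # Stands in for the permission prompt tool path: the answer comes from outside.
    prompt: Callable[[PermissionRequest], Decision]

    def evaluate(self, req: PermissionRequest) -> Decision:
        return self.prompt(req)


@dataclass
class CodexAdapter:
    # Models a writable-root rule: writes under a root pass, everything else asks.
    writable_roots: list[Path]

    def evaluate(self, req: PermissionRequest) -> Decision:
        if req.action == "write_file" and any(
            inside(req.target, root) for root in self.writable_roots
        ):
            return Decision.ALLOW
        return Decision.ASK


@dataclass
class GeminiAdapter:
    # Models a cwd-scoped rule: file access outside the session cwd is refused.
    cwd: Path

    def evaluate(self, req: PermissionRequest) -> Decision:
        if req.action in FILE_ACTIONS and not inside(req.target, self.cwd):
            return Decision.DENY
        return Decision.ASK


class PermissionBroker:
    """One entry point; each provider keeps its own scoping rule."""

    def __init__(self, adapters: dict[str, ProviderAdapter]) -> None:
        self.adapters = adapters

    def evaluate(self, req: PermissionRequest) -> Decision:
        adapter = self.adapters.get(req.provider)
        if adapter is None:
            return Decision.DENY  # unknown provider: fail closed
        return adapter.evaluate(req)
```

The broker adds a single entry point without collapsing the three rules into one. In this sketch a Codex write outside its writable roots escalates to ASK, while a Gemini file access outside the cwd is refused outright.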

Why Headless Enforcement Matters So Much

This feature becomes even more important once workflows run headlessly.

In a watched interactive session, a sloppy permission abstraction is annoying. In a headless run, it can be actively dangerous.

That is why limerIQ defaults to strict headless enforcement. If a headless run reaches an action that would normally require approval, the system denies it unless the action matches an auto-approval rule.

No fake prompt.

No invisible "well, maybe it is fine."

No unattended workflow trying to negotiate with itself.

That is exactly how the model should behave.

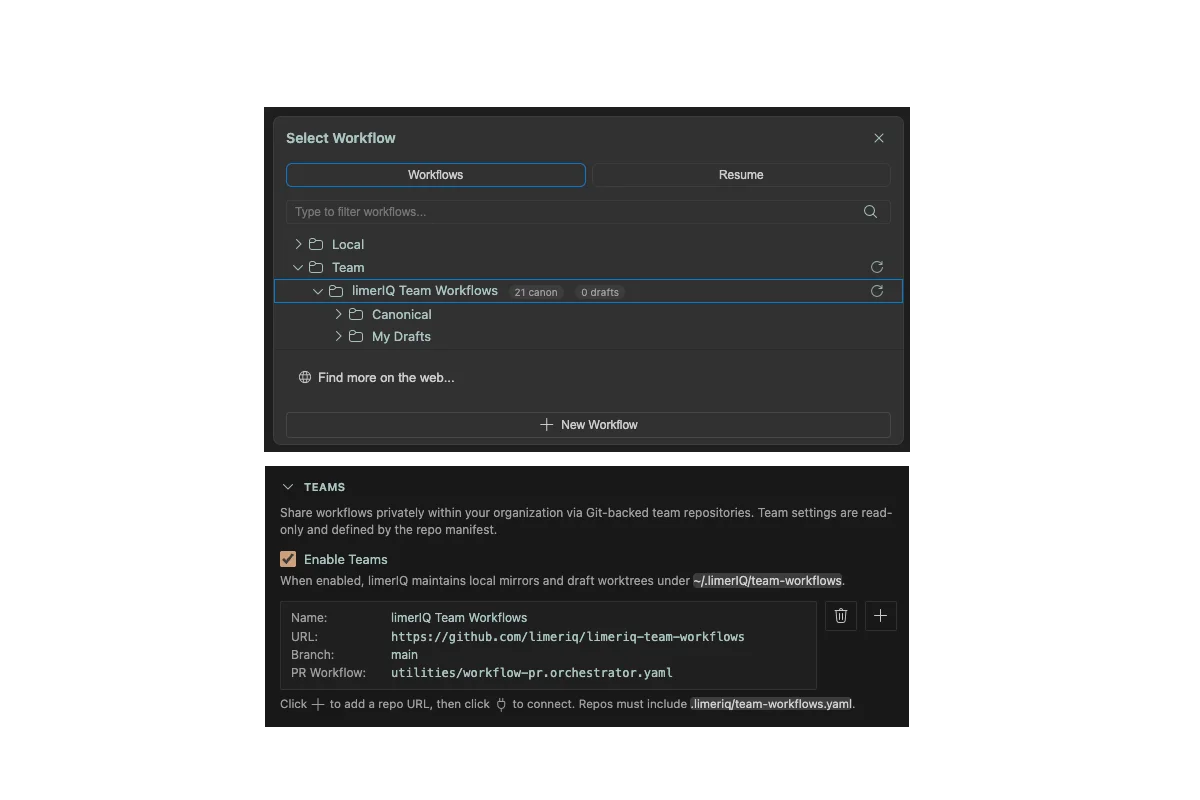

If a team wants caller-controlled headless approval policies, they can opt into that deliberately. But governance should only loosen when someone explicitly says so.
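The headless behavior described in this section can be sketched as one fail-closed resolver. This is a Python illustration under stated assumptions, not limerIQ's implementation: `ApprovalContext`, `resolve`, and the `(action, target)` rule format are hypothetical, and auto-approval rules are modeled as exact-match pairs. A human prompt exists only in a watched session, a caller policy exists only if someone deliberately sets one, and otherwise the answer is deny.

```python
from dataclasses import dataclass
from typing import Callable, Optional

Approver = Callable[[str, str], bool]  # (action, target) -> approved?


@dataclass(frozen=True)
class ApprovalContext:
    # Exact (action, target) pairs that never need a human.
    auto_approve: frozenset[tuple[str, str]] = frozenset()
    # Set only in a watched interactive session; reaches a real person.
    human_prompt: Optional[Approver] = None
    # Headless opt-in: a caller-supplied policy. None means strict mode.
    caller_policy: Optional[Approver] = None


def resolve(action: str, target: str, ctx: ApprovalContext) -> bool:
    """Resolve an action that would normally require approval.

    Returns True to allow, False to deny. Every True traces back to a rule,
    a person, or an explicit caller policy; nothing is guessed.
    """
    if (action, target) in ctx.auto_approve:
        return True
    if ctx.human_prompt is not None:
        # A person is watching, so they take precedence over any caller policy.
        return bool(ctx.human_prompt(action, target))
    if ctx.caller_policy is not None:
        # Loosening happens only because the caller supplied a policy.
        return bool(ctx.caller_policy(action, target))
    return False  # strict headless default: deny, no prompt, no guessing
```

Exact matching is deliberately conservative. A pattern-based rule format would need care: a glob such as `npm test*` also matches `npm test; rm -rf ~`, which is exactly the kind of quiet loosening strict enforcement is meant to prevent.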

A Permission System Is a Product Claim

This is easy to miss, but permission handling is not just a technical concern. It is part of the product's credibility.

When a tool says:

"You can govern AI execution across providers,"

what people really hear is:

"I can trust your control surface when the runtime is doing something consequential."

That promise is only believable if the permission system is grounded in the actual provider behavior.

Otherwise the whole thing is theater.

This Also Makes Multi-Provider limerIQ Stronger

The new permission model also reinforces one of limerIQ's best structural advantages: multi-provider execution.

It is one thing to route steps across different models.

It is another to do that without flattening the safety model into nonsense.

The native-parity approach makes the multi-provider story much stronger because it says:

- yes, you can orchestrate across Claude, Codex, and Gemini

- no, you do not have to accept a pretend permission layer to do it

That is the kind of detail people may not notice on a first pass, but it matters a lot once the workflows become operational.

Why This Belongs in the Relaunch

This was not on the original list of features people would instinctively lead with, but it belongs in the story now.

Because once limerIQ is positioned as the governed execution layer for AI-native software delivery, the permission system stops being a support feature and starts being a pillar.

If the workflow is allowed to write code, execute commands, touch multiple repos, and run unattended, then the approval model is part of the product's core truth.

It cannot be fake.

It cannot be hand-wavy.

It has to map to real provider behavior and real runtime policy.

That is what this release moves us toward.