We first built limerIQ as a better way for individuals to orchestrate coding agents. This release turns it into something broader: a governed execution layer that lets teams define how AI work should run, what counts as done, and what proof survives after the run ends.

AI made code abundant.

Writing it is no longer the hard part.

The bottleneck moved downstream: requirements that do not stay attached to the work, pull requests that arrive faster than reviewers can clear them, tests that do not prove much, infra changes that need real scrutiny, and AI runs that are impossible to trust once the chat window is gone.

That is the backdrop for this release.

The old framing of limerIQ was directionally right, but too small. Yes, limerIQ helped individuals get better results from Claude Code, Codex, Gemini, Kimi, and the rest. But the thing we have actually been building is larger than "better prompting" and more durable than "workflow automation."

limerIQ is becoming the governed execution layer for AI-native software delivery.

That phrase matters, because it changes what the product is for.

We are not trying to be another chat wrapper, another prompt library, or another dashboard for watching agents stream tokens in a prettier box. We are trying to give teams a way to encode how software delivery should happen, run that process across real repositories and real environments, and verify that the work met the bar before it moves forward.

In practice, this release adds the pieces that make that claim credible.

The Product Shift

The new limerIQ is built around a simple idea: if AI is going to participate in software delivery, the execution itself has to be governable.

That means:

- the workflow has to define the path, not the agent's mood

- steps need enforceable outputs, not polite suggestions

- work has to cross repositories intentionally

- concurrent execution needs isolation

- unattended runs need safety controls

- delivery needs evidence, not just logs

- team standards need a canonical home

That is the common thread behind what shipped.

What Shipped

1. Agent guardrails

The biggest shift is that limerIQ can now enforce far more than "did the step return a string."

You can define required outputs, expected file actions, retry loops for incomplete work, JSON-schema checks, and code-based compliance checks. In other words, you can tell a workflow what "done" actually means and have the runtime verify it.
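To make "fail closed" concrete, here is a minimal sketch of the idea, not limerIQ's actual runtime or workflow format. Everything in it (`StepContract`, `find_violations`, `run_guarded`, `StepRejected`) is a hypothetical name chosen for illustration, and the type check on outputs is a deliberately simplified stand-in for real JSON-schema validation. A step declares its required outputs and expected file actions, gets retried with the list of violations as feedback, and raises instead of passing partial work downstream.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Set, Tuple

# Hypothetical step signature: receives feedback, returns (outputs, files written).
StepFn = Callable[[List[str]], Tuple[Dict[str, Any], Set[str]]]


@dataclass
class StepContract:
    """Hypothetical contract describing what "done" means for one workflow step."""
    required_outputs: Dict[str, type]  # output name -> expected type (simplified schema check)
    expected_files: Set[str] = field(default_factory=set)  # paths the step must have written
    max_attempts: int = 3


class StepRejected(Exception):
    """Raised when a step never satisfies its contract, so the run fails closed."""


def find_violations(contract: StepContract, outputs: Dict[str, Any],
                    files_written: Set[str]) -> List[str]:
    """Code-based compliance check: list every way the step fell short."""
    problems = []
    for name, expected_type in contract.required_outputs.items():
        if name not in outputs:
            problems.append(f"missing output: {name}")
        elif not isinstance(outputs[name], expected_type):
            problems.append(f"output {name} should be {expected_type.__name__}")
    for path in sorted(contract.expected_files - files_written):
        problems.append(f"expected file action not performed: {path}")
    return problems


def run_guarded(step: StepFn, contract: StepContract) -> Dict[str, Any]:
    """Retry an incomplete step with feedback; raise rather than accept partial work."""
    feedback: List[str] = []
    for _ in range(contract.max_attempts):
        outputs, files_written = step(feedback)
        feedback = find_violations(contract, outputs, files_written)
        if not feedback:
            return outputs
    raise StepRejected("; ".join(feedback))
```

Feeding the violations back into the next attempt is what turns a retry into a correction loop rather than a blind rerun, and the final `raise` is the "closed" part: an exhausted step stops the workflow instead of quietly handing an incomplete result to the next step.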

Read more: Agent guardrails that fail closed

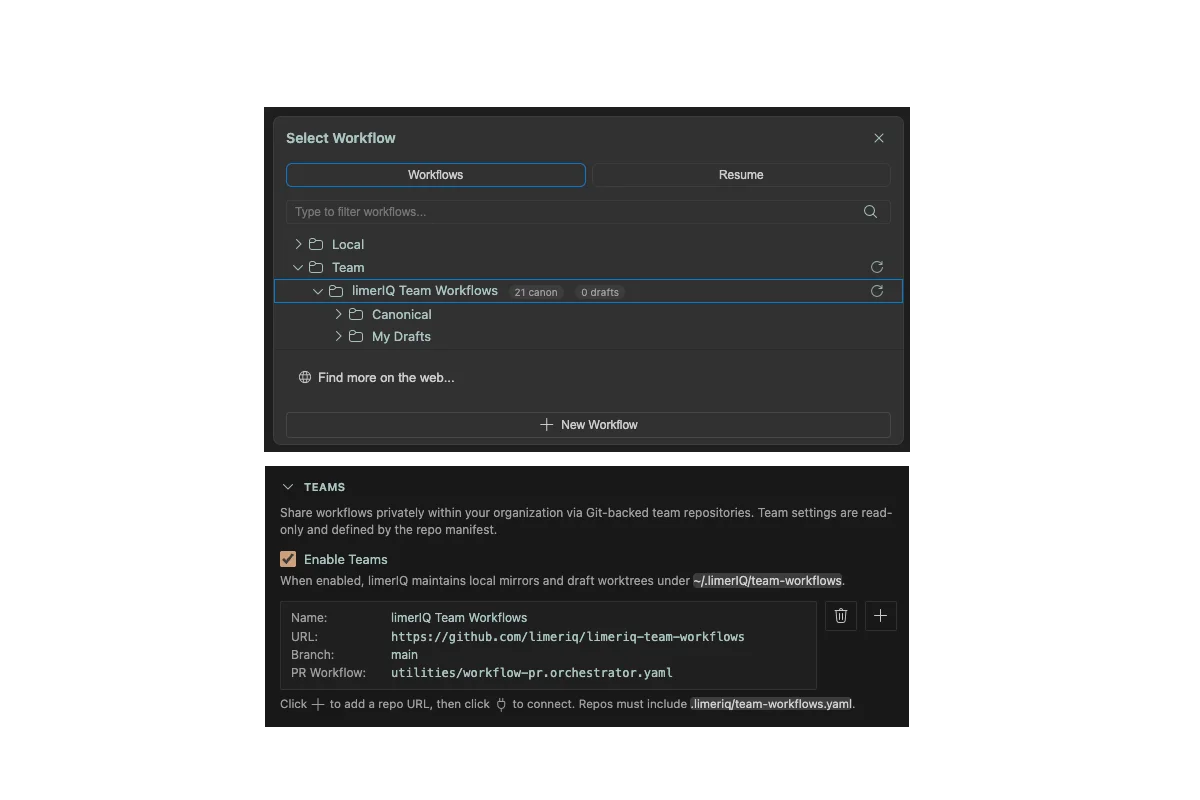

2. Team workflow repos

The marketplace is still important, but shared execution inside a company needs a different shape. Teams need canonical workflows, version history, review, and a clean path from local experimentation to governed team updates.

That is what team workflow repos are for.

Read more: A workflow repo for the team, not a pile of personal prompts

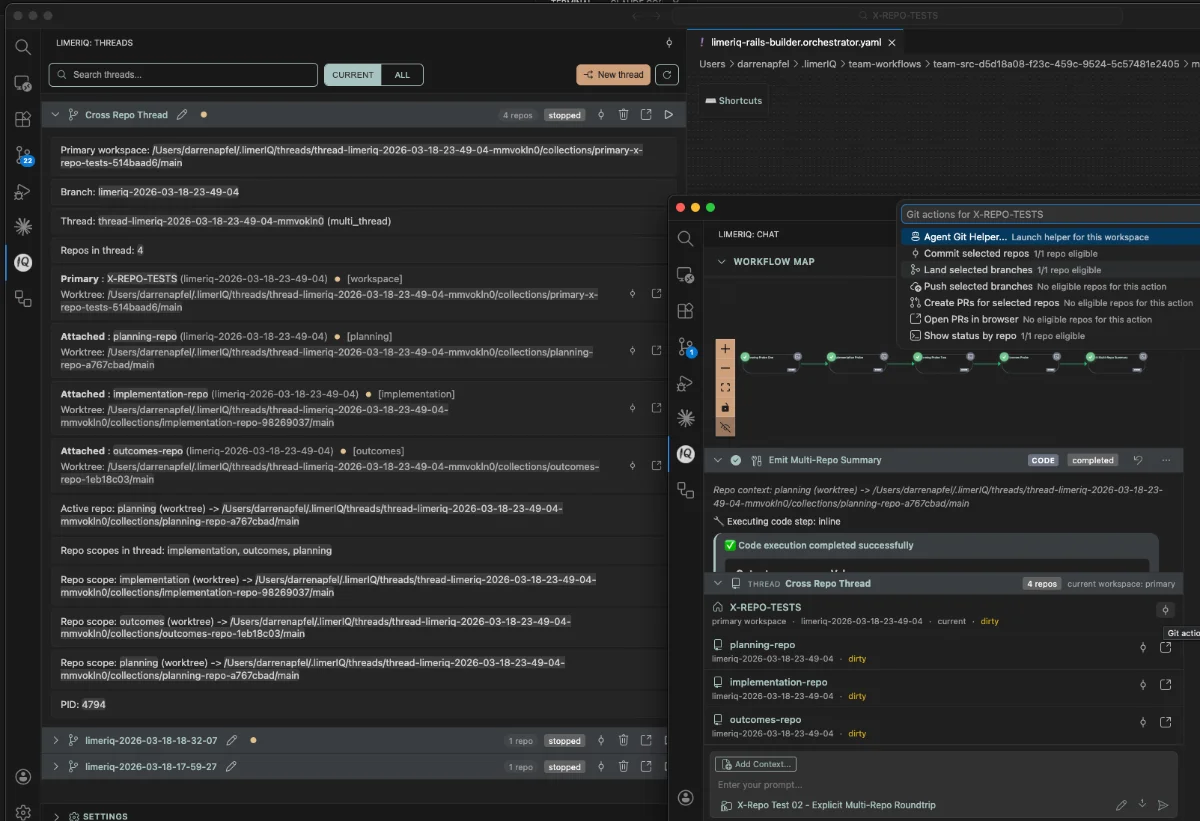

3. Cross-repo orchestration

Real delivery crosses boundaries. Product intent lives in one repo, implementation in another, infrastructure in a third, and release plumbing somewhere else entirely.

limerIQ can now route steps and even chained workflows across repositories on purpose, with explicit repo scopes and policy checks before execution starts.
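A minimal sketch of what "explicit repo scopes and policy checks before execution starts" can mean, assuming a simple model where a workflow declares per-repo access and every step names the repo it touches. The names (`Access`, `Step`, `check_plan`, `run_plan`) and the read/write scope model are hypothetical illustrations, not limerIQ's configuration. The whole plan is validated up front, so no step runs if any step would leave its declared scope.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Dict, List


class Access(Enum):
    READ = 1
    WRITE = 2


@dataclass(frozen=True)
class Step:
    name: str
    repo: str       # repository the step operates on
    access: Access  # access level the step needs


def check_plan(scopes: Dict[str, Access], steps: List[Step]) -> List[str]:
    """Return every policy violation in the plan; an empty list means it may start."""
    errors = []
    for step in steps:
        granted = scopes.get(step.repo)
        if granted is None:
            errors.append(f"{step.name}: repo {step.repo!r} is not in scope")
        elif step.access.value > granted.value:
            errors.append(f"{step.name}: needs {step.access.name} on {step.repo!r}, "
                          f"scope grants {granted.name}")
    return errors


def run_plan(scopes: Dict[str, Access], steps: List[Step],
             execute: Callable[[Step], None]) -> None:
    """Check the whole cross-repo plan first; execute nothing if any step is out of bounds."""
    errors = check_plan(scopes, steps)
    if errors:
        raise PermissionError("plan rejected before execution: " + "; ".join(errors))
    for step in steps:
        execute(step)
```

Checking before the first step, rather than as each step arrives, avoids the worst cross-repo failure mode: half a change landed in one repository before the run discovers it was never allowed to touch the next one.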

Read more: Software delivery is cross-repo. Your AI workflows should be too.

4. Threads and worktrees

We also needed a better execution model for concurrency.

The answer is Threads: a user-facing execution context that can own one primary workspace and attach additional repo worktrees as the workflow expands. Git worktrees are still the primitive, but Threads give them a much clearer product surface, one that holds up as the number of concurrent runs and repositories grows.
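The underlying git mechanics can be sketched in a few lines. `git -C <repo> worktree add -b <branch> <path> <commit-ish>` is real git; the `Thread` class, the `thread/<id>` branch naming, and the `<root>/<thread_id>/<repo>` directory layout are hypothetical choices for illustration, not how limerIQ lays things out. The first repo attached becomes the primary workspace, and each further repo gets its own isolated worktree on a thread-specific branch.

```python
import subprocess
from dataclasses import dataclass, field
from pathlib import Path
from typing import Dict, List, Optional


@dataclass
class Thread:
    """Hypothetical execution context: one primary worktree plus attached repo worktrees."""
    thread_id: str
    root: Path
    worktrees: Dict[str, Path] = field(default_factory=dict)  # repo name -> worktree path

    @property
    def branch(self) -> str:
        # One branch name per thread; branches are per-repo, so it can repeat across repos.
        return f"thread/{self.thread_id}"

    @property
    def primary(self) -> Optional[Path]:
        # Dicts keep insertion order, so the first attached worktree is the primary one.
        return next(iter(self.worktrees.values()), None)

    def attach(self, repo: Path, base: str = "HEAD", dry_run: bool = False) -> List[str]:
        """Create an isolated worktree of `repo` on this thread's branch."""
        if repo.name in self.worktrees:
            raise ValueError(f"{repo.name} is already attached to thread {self.thread_id}")
        path = self.root / self.thread_id / repo.name
        cmd = ["git", "-C", str(repo), "worktree", "add", "-b", self.branch, str(path), base]
        if not dry_run:
            subprocess.run(cmd, check=True)  # raises CalledProcessError if git fails
        self.worktrees[repo.name] = path
        return cmd
```

Two threads working in the same repository end up on different branches in different directories, which is what lets them run concurrently without stepping on each other's checkouts.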

Read more: Threads: one execution context, many isolated worktrees

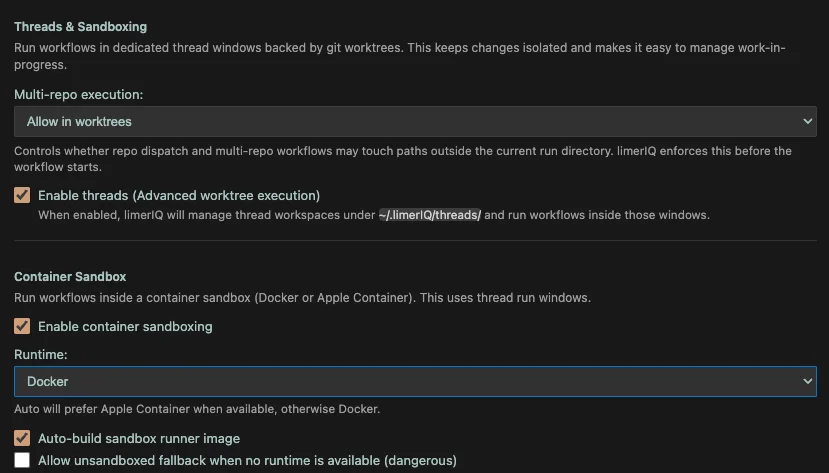

5. Sandboxed execution

Autonomy without isolation is a bad trade.

limerIQ can now run workflow worktrees inside a sandboxed container environment with Docker or Apple Container, while still handling provider authentication, mounted paths, and retry flows in a way that is practical for real teams.
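As a rough illustration of what "run the worktree in a container" involves, the sketch below builds a `docker run` command that mounts only one worktree, drops Linux capabilities, and forwards provider credentials by environment variable name. The flags used (`--rm`, `-v`, `-w`, `-e NAME`, `--cap-drop`, `--security-opt no-new-privileges`) are standard Docker options; the function name, the image name, and which variables a given provider needs are assumptions, and Apple Container has its own CLI that this sketch does not cover.

```python
from typing import List


def sandbox_command(worktree: str, image: str, step_cmd: List[str],
                    auth_env: List[str]) -> List[str]:
    """Hypothetical helper: build a `docker run` argv confining one step to one worktree."""
    argv = [
        "docker", "run", "--rm",              # remove the container when the step ends
        "-v", f"{worktree}:/workspace",       # mount only this worktree, nothing else from the host
        "-w", "/workspace",                   # start the step inside the mounted worktree
        "--cap-drop", "ALL",                  # drop Linux capabilities the step should not need
        "--security-opt", "no-new-privileges",
    ]
    for name in auth_env:
        # `-e NAME` without a value forwards the host's value, keeping the secret out of argv.
        argv += ["-e", name]
    return argv + [image] + step_cmd
```

Passing the result to `subprocess.run(argv, check=True)` would execute the step; keeping the builder pure makes the isolation policy easy to inspect and test on its own.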

Read more: Run the workflow in a sandbox, not on faith

6. Execution provenance

We needed a better term than "evidence trails," and the one that fits best is probably execution provenance.

This release adds a trust chain made up of evidence bundles, proof-of-execution verification, and attestation generation. The point is straightforward: a workflow should be able to prove what happened, what artifacts were produced, and what run or commit they belong to.
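The trust chain can be illustrated with standard-library primitives. This is a minimal sketch under stated assumptions, not limerIQ's bundle or attestation format: the evidence bundle records a SHA-256 digest per artifact tied to a run ID and commit, the "attestation" is an HMAC over a canonical JSON encoding (a real system would more likely use public-key signatures and an established attestation format), and verification recomputes both. The function names and bundle fields are hypothetical.

```python
import hashlib
import hmac
import json
from typing import Dict


def build_bundle(run_id: str, commit: str, artifacts: Dict[str, bytes]) -> dict:
    """Evidence bundle: a SHA-256 digest for each artifact, tied to a run and a commit."""
    return {
        "run_id": run_id,
        "commit": commit,
        "artifacts": {name: hashlib.sha256(data).hexdigest()
                      for name, data in sorted(artifacts.items())},
    }


def attest(bundle: dict, key: bytes) -> str:
    """Sign a canonical JSON encoding of the bundle (HMAC stands in for a real signature)."""
    canonical = json.dumps(bundle, sort_keys=True, separators=(",", ":")).encode()
    return hmac.new(key, canonical, hashlib.sha256).hexdigest()


def verify(bundle: dict, signature: str, key: bytes, artifacts: Dict[str, bytes]) -> bool:
    """Proof-of-execution check: signature matches and artifacts still match their digests."""
    if not hmac.compare_digest(attest(bundle, key), signature):
        return False  # bundle was altered (e.g. a different run or commit) after signing
    recomputed = build_bundle(bundle["run_id"], bundle["commit"], artifacts)
    return recomputed["artifacts"] == bundle["artifacts"]
```

Because the run ID and commit are inside the signed payload, an artifact cannot be silently re-attributed to a different run, and any change to the artifact bytes breaks verification.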

Read more: Execution provenance, not vibes

7. Headless and autonomous execution

limerIQ is no longer just something you drive from the IDE.

There is now a real headless runner story: queue processing, autonomous execution, approval routing, reporter pipelines, deployable runner flows, and a path to embedding limerIQ in CI, scheduled jobs, and other automation.
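A headless runner reduces to a loop with a few extension points. The sketch below is a hypothetical shape for queue processing, approval routing, and a reporter pipeline, not limerIQ's runner API: jobs that need approval are parked instead of run, every outcome is sent to each reporter (which could post to CI, chat, or a dashboard), and an approved job is routed back into the queue.

```python
from collections import deque
from dataclasses import dataclass, field, replace
from typing import Callable, Deque, List


@dataclass(frozen=True)
class Job:
    job_id: str
    workflow: str
    needs_approval: bool = False


@dataclass
class HeadlessRunner:
    """Hypothetical headless runner: drains a queue with no IDE and no interactive user."""
    run: Callable[[Job], str]  # executes a workflow and returns a status string
    reporters: List[Callable[[Job, str], None]] = field(default_factory=list)
    queue: Deque[Job] = field(default_factory=deque)
    pending_approval: List[Job] = field(default_factory=list)

    def submit(self, job: Job) -> None:
        self.queue.append(job)

    def drain(self) -> None:
        """Process every queued job, parking the ones that need a human decision."""
        while self.queue:
            job = self.queue.popleft()
            if job.needs_approval:
                self.pending_approval.append(job)
                status = "awaiting_approval"
            else:
                status = self.run(job)
            for report in self.reporters:  # reporter pipeline: every outcome is published
                report(job, status)

    def approve(self, job_id: str) -> None:
        """Approval routing: move an approved job back into the queue."""
        for job in list(self.pending_approval):
            if job.job_id == job_id:
                self.pending_approval.remove(job)
                self.queue.append(replace(job, needs_approval=False))
```

The same loop can be invoked from a CI job or a scheduler; nothing in it assumes an editor is open.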

Read more: When limerIQ leaves the IDE

8. Provider-native permissions

One of the quieter but more important changes is the move toward provider-native permission handling. Governance breaks down quickly if the permission model is fake, leaky, or flattened into a lowest-common-denominator abstraction.

limerIQ now brokers approvals in a way that respects the real permission surfaces of Claude, Codex, and Gemini, including stricter behavior in headless runs.
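The difference between "provider-native" and "flattened" can be shown with a small broker. In this hypothetical sketch (the names `Decision`, `PermissionRequest`, and `broker` and the action labels are illustrative, not the real permission kinds of Claude, Codex, or Gemini), policy is keyed by the provider *and* its own action name, so nothing is collapsed into a generic "allow tools" switch. Anything that needs a human is denied in headless mode, because there is no one there to ask.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Dict, Optional, Tuple


class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"
    ASK = "ask"


@dataclass(frozen=True)
class PermissionRequest:
    provider: str  # e.g. "claude", "codex", "gemini"
    action: str    # the provider's own permission kind, passed through unflattened (hypothetical labels)


def broker(request: PermissionRequest,
           policy: Dict[Tuple[str, str], Decision],
           headless: bool,
           ask_human: Optional[Callable[[PermissionRequest], bool]] = None) -> bool:
    """Decide one permission request; unknown actions default to ASK."""
    decision = policy.get((request.provider, request.action), Decision.ASK)
    if decision is Decision.ALLOW:
        return True
    if decision is Decision.DENY:
        return False
    # ASK: only a human can approve. Headless runs have none, so they deny (fail closed).
    if headless or ask_human is None:
        return False
    return ask_human(request)
```

The stricter headless behavior falls out of the defaults: an action the policy never mentioned is treated as "ask", and "ask" without a human becomes "no".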

Read more: Governance is not real if your permission model is fake

Why This Repositioning Matters

The easiest way to misunderstand AI in software is to focus on code generation as the center of gravity.

It is not.

Code generation is important, but once it becomes cheap and ubiquitous, the scarce thing is confidence:

- confidence that the right work got done

- confidence that changes stayed in bounds

- confidence that tests and checks actually ran

- confidence that cross-repo execution did not drift

- confidence that an unattended run did not quietly invent its own rules

- confidence that a team can reuse a workflow without inheriting chaos

That is what governance is really about here. Not bureaucracy for its own sake. Not "enterprise features" bolted onto an otherwise consumer product. Governance in the literal sense: defining the process, enforcing the path, and preserving the receipts.

This is also why the new homepage copy centers trust, throughput, and the governed path. The market no longer needs another argument that AI can write code. Everyone believes that now. The harder and more useful problem is making AI-generated work trustworthy enough to move through a real delivery system.

What Comes Next

This release does not finish the story. It starts a cleaner one.

There is still more to build around hosted control plane workflows, deeper team operating models, and broader automation surfaces. But the center of the product is now much sharper than it was before.

limerIQ is not just for getting a better answer from your favorite coding model.

It is for defining how AI work should execute across your software delivery lifecycle, and making that execution governable.

That is the product now.