If a workflow changed code, ran tests, and claimed success, the interesting question is not what it said. The interesting question is what evidence survived.

We needed a better umbrella term for this part of limerIQ.

"Evidence trails" is close.

"Provenance" is close.

"Trust chain" is close.

The phrase I keep coming back to is execution provenance.

Because the real feature is not just "we saved some logs." The real feature is that a workflow run can now leave behind a structured chain of evidence about what happened, what artifacts were produced, and what that execution can be tied back to later.

That is a much more useful thing.

Why Logs Are Not Enough

The standard AI tool answer to accountability is usually some version of "we keep a transcript."

That helps, but it is not the same as provenance.

A transcript tells you what the model said.

It does not necessarily tell you:

- which artifacts were actually produced

- whether those artifacts match what the system registered during the run

- whether the evidence bundle was tampered with later

- which commit, PR, repo, or workflow name the run belongs to

That is why we added a proper trust chain to limerIQ instead of pretending a chat log is an audit system.

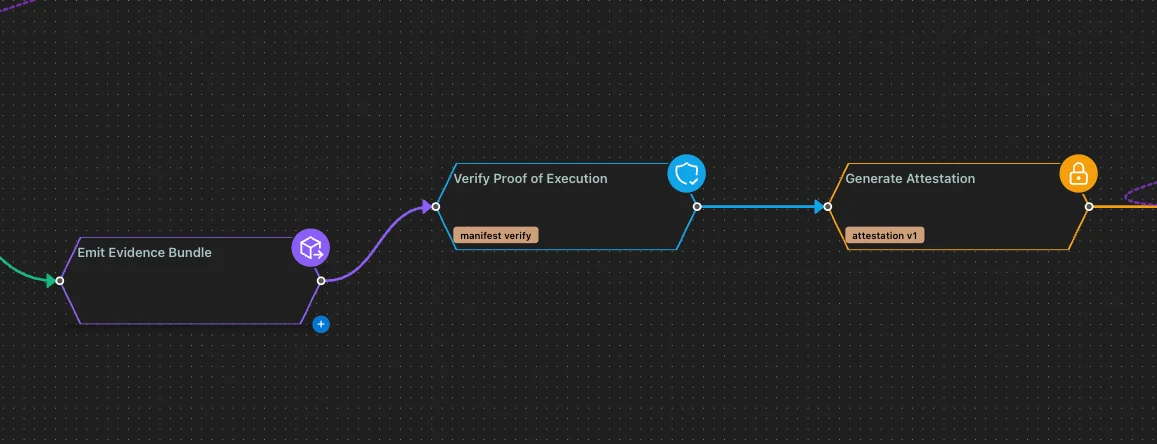

Step One: Emit the Evidence Bundle

The first trust step is emit-evidence-bundle.

This step generates a manifest and summary for the run based on the run ledger, artifact registry, metering ledger, and lineage data. In plain English, it turns the execution into a concrete evidence package instead of a pile of loosely related outputs.

That bundle is not just a text note saying "tests passed." It is a structured manifest of what the run produced and how the runtime saw it.

The step also computes a hash of the manifest content. That matters because any later change to the manifest changes the hash, so the integrity of the bundle can be checked after the fact.
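To make the manifest-plus-hash idea concrete, here is a minimal sketch. It is not limerIQ's actual format: the field names (`run_id`, `artifacts`, `sha256`), the helper names, the choice of SHA-256, and the canonical JSON encoding are all assumptions made for illustration, and it only covers artifacts, not the run ledger, metering ledger, or lineage data.

```python
import hashlib
import json


def build_manifest(run_id, artifacts):
    """Assemble a hypothetical evidence manifest.

    `artifacts` maps an artifact name to its raw bytes. Each entry is
    recorded with a SHA-256 fingerprint (an assumed choice of hash).
    """
    return {
        "run_id": run_id,
        "artifacts": [
            {"name": name, "sha256": hashlib.sha256(data).hexdigest()}
            for name, data in sorted(artifacts.items())
        ],
    }


def manifest_hash(manifest):
    """Hash a canonical JSON encoding so key order cannot change the digest."""
    canonical = json.dumps(manifest, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


# Placeholder run ID and artifact contents, for illustration only.
manifest = build_manifest(
    "run-001",
    {"test-report.xml": b"<testsuite/>", "patch.diff": b"--- a\n+++ b\n"},
)
digest = manifest_hash(manifest)
```

Hashing a canonical encoding (sorted keys, fixed separators) is the design choice that matters here: two manifests with the same content always produce the same digest, and any edit to the content produces a different one.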

This is the point where the workflow starts leaving behind receipts instead of commentary.

Step Two: Prove the Execution

The second trust step is proof-of-execution.

This step verifies that the evidence manifest exists, parses correctly, matches the expected schema, and aligns with the artifact registry. If the manifest says an artifact exists with a specific fingerprint, the verification step checks whether the registered artifact actually has that fingerprint.

That is the crucial move.

Without verification, an evidence bundle is just a nicer report.

With verification, the workflow can detect missing artifacts, mismatched fingerprints, or a manifest whose hash no longer matches the one computed when the bundle was emitted.
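The checks described in this step can be sketched as a function that returns a list of problems. This is an illustration under assumptions, not the proof-of-execution implementation: the manifest layout, the `registry` mapping of artifact name to fingerprint, and the function names are hypothetical.

```python
import hashlib
import json


def manifest_hash(manifest):
    """Hash a canonical JSON encoding of the manifest (assumed scheme)."""
    canonical = json.dumps(manifest, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def verify_manifest(manifest, expected_hash, registry):
    """Return a list of problems; an empty list means every check passed.

    `registry` is a hypothetical mapping of artifact name to the SHA-256
    fingerprint the runtime registered during the run.
    """
    # Minimal schema check: a dict with a list of artifact entries.
    if not isinstance(manifest, dict) or not isinstance(manifest.get("artifacts"), list):
        return ["schema: manifest must be an object with an 'artifacts' list"]

    problems = []
    if manifest_hash(manifest) != expected_hash:
        problems.append("manifest hash mismatch")

    for entry in manifest["artifacts"]:
        if not isinstance(entry, dict):
            problems.append("schema: artifact entry must be an object")
            continue
        name = entry.get("name")
        registered = registry.get(name)
        if registered is None:
            problems.append(f"missing artifact: {name}")
        elif registered != entry.get("sha256"):
            problems.append(f"fingerprint mismatch: {name}")
    return problems


# Placeholder data, for illustration only.
fp = hashlib.sha256(b"<testsuite/>").hexdigest()
manifest = {"run_id": "run-001", "artifacts": [{"name": "test-report.xml", "sha256": fp}]}
expected = manifest_hash(manifest)

ok = verify_manifest(manifest, expected, {"test-report.xml": fp})
tampered = verify_manifest(manifest, expected, {"test-report.xml": "0" * 64})
missing = verify_manifest(manifest, expected, {})
```

Returning a list of problems rather than a single boolean lets a workflow report every failed check at once, which is more useful for triage than stopping at the first mismatch.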

That is where provenance becomes a control, not just a document.

Step Three: Generate the Attestation

The final trust step is attestation-generator.

Once the evidence has been emitted and verified, limerIQ can generate an attestation bound to the manifest hash. That attestation can also carry useful execution context like:

- run ID

- commit SHA

- PR number

- repository

- workflow name

In other words, the system is not only preserving what happened. It is binding that evidence to the delivery context the run belongs to.
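As a rough sketch of what "bound to the manifest hash" can look like in data terms, the record below carries the hash alongside the context fields listed above. The class name, field names, and helper functions are hypothetical, the values are placeholders, and the sketch does not sign anything; it only shows the binding.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass(frozen=True)
class Attestation:
    """Hypothetical attestation record tied to one evidence manifest."""

    manifest_hash: str
    run_id: str
    commit_sha: str
    repository: str
    workflow_name: str
    pr_number: Optional[int] = None  # not every run belongs to a PR


def generate_attestation(manifest_hash, *, run_id, commit_sha, repository,
                         workflow_name, pr_number=None):
    """Bind the delivery context to a specific manifest hash."""
    return Attestation(
        manifest_hash=manifest_hash,
        run_id=run_id,
        commit_sha=commit_sha,
        repository=repository,
        workflow_name=workflow_name,
        pr_number=pr_number,
    )


def is_bound_to(attestation, manifest_hash):
    """True only if the attestation refers to exactly this manifest."""
    return attestation.manifest_hash == manifest_hash


# Placeholder values, for illustration only.
attestation = generate_attestation(
    "a" * 64,
    run_id="run-001",
    commit_sha="0" * 40,
    repository="example-org/example-repo",
    workflow_name="fix-and-validate",
    pr_number=42,
)
serialized = json.dumps(asdict(attestation), sort_keys=True)
```

Because the record is frozen and keyed on the manifest hash, an attestation generated for one bundle cannot quietly be reused for a different one: `is_bound_to` fails as soon as the manifest content, and therefore its hash, differs.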

That is a much stronger handoff for review, release, compliance, or post-run investigation.

Why This Feature Exists

There is a broader product reason this feature matters.

Once AI enters software delivery, the hardest question is not "can the agent write code?"

It is:

"What can we prove about the run after the agent is gone?"

If a workflow proposed a fix, ran validation, updated files, and declared success, teams need more than a vibe-based answer. They need artifacts, hashes, and a chain they can inspect later.

That is especially true for:

- release gates

- CI triage

- PR review workflows

- regulated environments

- any workflow that is going to run headlessly

The more autonomous the run is, the more important the provenance becomes.

This Is Also About Product Maturity

Adding trust-chain steps changes the kind of system limerIQ is becoming.

A lot of AI products want the emotional benefit of trust without paying the architectural cost. They talk about confidence, quality, or reliability, but when you look closely, the evidence is still mostly narrative.

We wanted something stricter.

The evidence step should generate actual artifacts.

The verification step should check actual integrity.

The attestation step should bind the run to actual delivery context.

That is the right sequence if you are serious about governed execution.

The Term We Should Probably Use

If you are looking for a cleaner product term than "evidence trails," I think the best candidates are:

- execution provenance

- trust chain

- attested execution record

My bias is toward execution provenance as the umbrella and trust chain as the internal pattern.

Why?

Because "provenance" captures the lifecycle question people actually care about: where did this result come from, what produced it, and what evidence supports it?

That is exactly what these steps are designed to answer.

Why This Belongs in the Relaunch

This feature is one of the clearest expressions of the new limerIQ.

It says we are no longer content with a workflow that merely looks disciplined. We want a workflow that can leave behind evidence of disciplined execution.

That is a different standard.

It is also the standard you need if limerIQ is going to be the governed execution layer for AI-native software delivery.

Because governed execution is not only about controlling the path.

It is also about preserving the proof.